Published on 21.2.2025

Measuring Personnel Development: Effective Evaluation Methods & KPIs

Category: Learning Hub

Reading time: 10 minutes

When it comes to measuring the effectiveness of training, many companies neglect evaluation, even though it is arguably the most important step. In a learning context, evaluation means gathering all relevant information to decide whether your training is effective and was worth the money, time, and effort. Today we give you a brief overview of the classic evaluation frameworks in the L&D industry. "Classic" does not mean old-fashioned or outdated. In fact, these are the most widely used methods for assessing the effectiveness of training in your company.

Measuring training effectiveness is crucial for understanding how well a training program improves employees' knowledge, skills, and performance, and what return on investment (ROI) it delivers. To determine a program's effectiveness, define the training objectives clearly before the training takes place. Clear objectives make it possible to measure the training's impact accurately and provide a basis for future training decisions, such as continuing with similar methods or adjusting the approach.
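ROI is commonly expressed as net benefit divided by cost. Here is a minimal sketch in Python, assuming you can already put a monetary figure on the training's benefit. The function name and the numbers are hypothetical and are not taken from any real program:

```python
def training_roi_percent(monetary_benefit: float, total_cost: float) -> float:
    """Return ROI as a percentage: (benefit - cost) / cost * 100."""
    if total_cost <= 0:
        raise ValueError("total_cost must be positive")
    return (monetary_benefit - total_cost) / total_cost * 100


# Hypothetical figures, for illustration only.
roi = training_roi_percent(monetary_benefit=60_000, total_cost=40_000)
print(f"ROI: {roi:.1f}%")  # prints "ROI: 50.0%"
```

The hard part in practice is not the arithmetic but estimating the benefit, which is why the training objectives need to be defined up front.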

To measure the effectiveness of personnel development, it is important to define and monitor specific key performance indicators (KPIs). These KPIs serve as a benchmark for the success of training measures and allow companies to assess accurately the value of their investment in employee development. Commonly used KPIs include:

The effectiveness of training programs can be measured with several proven methods. The most commonly used approaches are described below.

One of the best-known methods for analyzing and evaluating training programs is the model developed in the 1950s by Dr. Donald Kirkpatrick (1924-2014). Since its inception, the Kirkpatrick model has been extended many times, and today it is regarded as one of the most respected models for evaluating training programs. It can be applied before, during, and after training to demonstrate the value of the training to the company. The model holds that an evaluation adds value only when all four levels are considered, because together they represent the process a training participant goes through.

The four evaluation levels of the Kirkpatrick model are reaction, learning, behavior, and results.

It is important to collect and review feedback continuously so that you can respond quickly to any problems. Kirkpatrick also recommends using comparison groups at every level to enable a meaningful comparison. This allows the effectiveness of the training to be assessed precisely and improved where necessary.
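One simple way to use a comparison group is to compare the average score gain of trained employees with that of an untrained group. The sketch below assumes both groups took the same pre- and post-test. The function names and scores are hypothetical, and a real analysis would also need to consider group sizes and statistical significance:

```python
from statistics import mean


def mean_gain(pre: list[float], post: list[float]) -> float:
    """Average per-person improvement between pre-test and post-test."""
    if not pre or len(pre) != len(post):
        raise ValueError("pre and post must be non-empty and the same length")
    return mean(after - before for before, after in zip(pre, post))


def training_effect(trained_pre, trained_post, control_pre, control_post) -> float:
    """Gain of the trained group minus gain of the comparison group."""
    return mean_gain(trained_pre, trained_post) - mean_gain(control_pre, control_post)


# Hypothetical test scores, for illustration only.
effect = training_effect(
    trained_pre=[50, 60, 55], trained_post=[70, 80, 75],
    control_pre=[52, 58, 56], control_post=[57, 63, 61],
)
print(f"Gain attributable to training: {effect:.1f} points")  # 15.0 points
```

Subtracting the comparison group's gain removes improvement that would have happened anyway, for example through on-the-job experience.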

Another valuable approach to evaluating the effectiveness of personnel development measures is the Success Case Method (SCM), developed by Robert Brinkerhoff. Unlike purely quantitative approaches, this method focuses on qualitative analysis of a small group of participants. The SCM aims to identify both the most and the least successful cases within a training program and to learn from them.

The SCM helps answer two basic questions: How well does a training program work under optimal conditions? And when it does not work, what are the reasons?

The evaluation process comprises the following steps:

Although carrying out the SCM takes time and resources, the results can provide substantial insights. Note, however, that this method should be used alongside quantitative analyses to obtain a comprehensive picture of training effectiveness.
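Identifying the most and least successful cases can be sketched as follows, assuming a short survey has produced one self-reported success score per participant. The scores, participant labels, and function name are hypothetical, and the interviews that follow remain qualitative work:

```python
def select_extreme_cases(scores: dict[str, float], n: int = 2) -> tuple[list[str], list[str]]:
    """Return the n highest-scoring and n lowest-scoring participants."""
    if n <= 0 or 2 * n > len(scores):
        raise ValueError("n must be positive and at most half the number of participants")
    ranked = sorted(scores, key=scores.get, reverse=True)  # best first
    return ranked[:n], ranked[-n:]


# Hypothetical survey scores (0-10), for illustration only.
survey = {"A": 9, "B": 3, "C": 7, "D": 1, "E": 5}
success_cases, non_success_cases = select_extreme_cases(survey, n=2)
print(success_cases)      # ['A', 'C']
print(non_success_cases)  # ['B', 'D']
```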

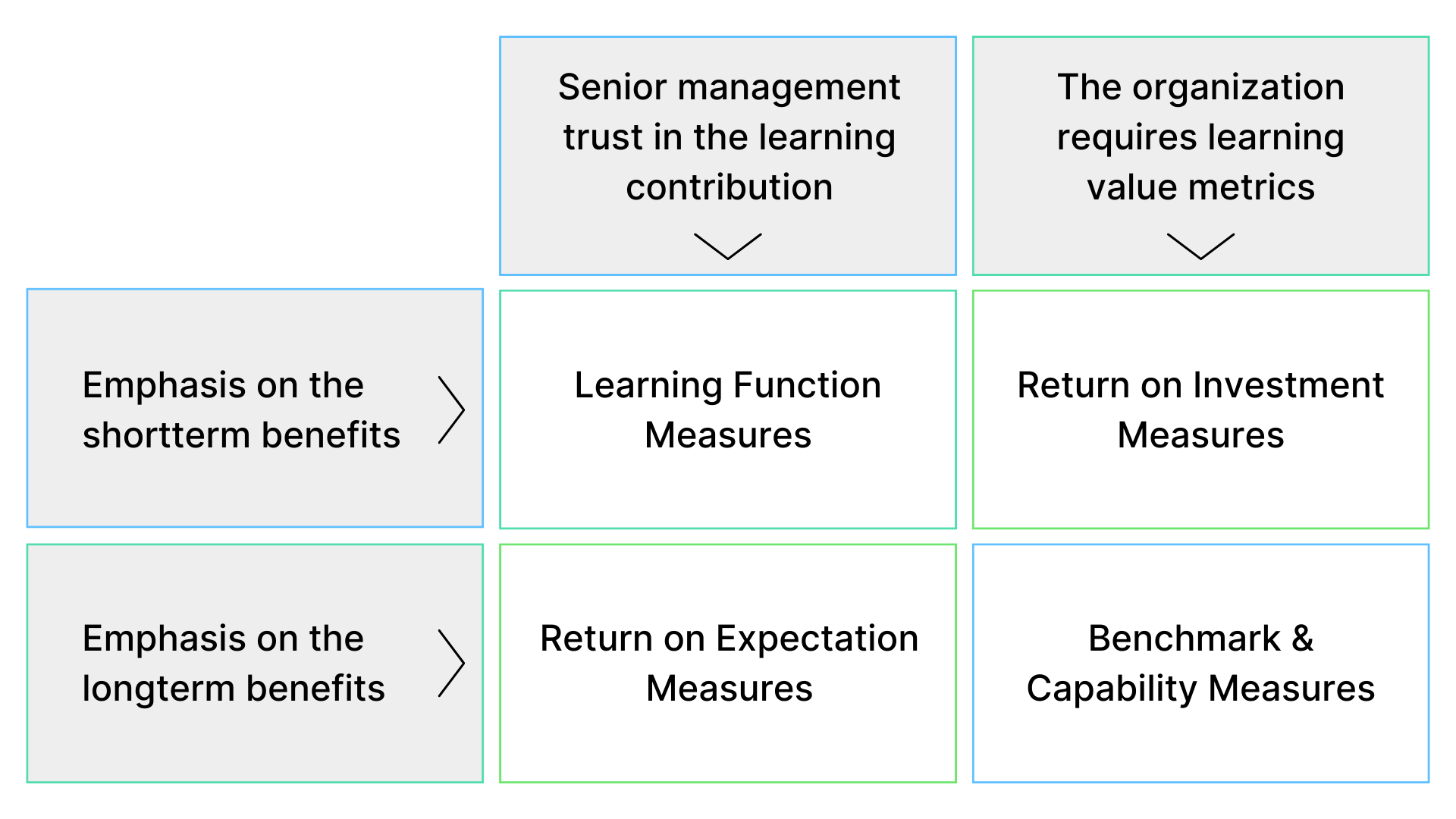

The Anderson model of learning evaluation, first published in 2006 by the Chartered Institute of Personnel and Development (CIPD), offers a three-stage evaluation cycle that aims to align the goals of a training program with a company's strategic priorities. Unlike other approaches, this model focuses less on evaluating individual program outcomes and more on evaluating the learning strategy as a whole.

The three-stage cycle of the Anderson model comprises:

Because the Anderson model is a high-level framework, it is best used in combination with other models, such as the Kirkpatrick model, to get a holistic view of the learning program's value to the company. By using several evaluation approaches, companies can gain a detailed understanding of the impact of their learning strategies and adjust them to support strategic goals effectively.

In today's business world, all economic activity is closely monitored, spending in particular. Companies invest substantial sums every year in training their employees, so it is crucial to know how valuable these investments really are. Simple pre- and post-tests are no longer enough to evaluate the success of training measures. Modern technologies and new evaluation approaches make it possible to design learning processes more efficiently and to demonstrate the actual ROI of personnel development.

With digital learning methods, we can track individual learning outcomes precisely, spot improvements immediately, and at the same time offer a highly personalized training program for each employee. You do not have to limit yourself to a single method for evaluating learning effectiveness. It is advisable to combine several methods to get a comprehensive picture of the impact of your training programs. Although not all approaches have to produce the same result, they should at least yield consistent conclusions and recommendations.

For a detailed discussion of how to determine the ROI of your personnel development measures, see also our Founder Talk, which offers further insights and practical tips.